CeX3D Inverse is Hardcore Processing's upcoming new product, a computer program for automatically turning ordinary photographs into digital 3D models.

The picture below shows an actual 3D model, generated fully automatically from three images:

The model consists of more than 500,000 polygons (triangles) and was generated automatically in around 115 minutes from three 4M pixel (2048x1536) images, without any prior or manual camera calibration. The model contains only a few holes (mostly due to incomplete testing) and therefore represents a solid physical 3D object quite well. Some parts are noisy or wrongly reconstructed, so work on improving the reconstruction quality is ongoing.

Some time ago, CeX3D Inverse's critical TODO-list before alpha release (click here) became available. One more point on that list is now complete, along with other improvements, as described below.

Point Six: Support for OBJ and RIB Files

CeX3D Inverse can now generate OBJ files with UV-texture maps. The UV-maps are currently projected from the first image only. More complete UV-texture map support will be added later, though the current UV-mapping looks fairly convincing when reconstructing models from a small number of photographs.
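To illustrate what projecting UV coordinates from a single image can mean, here is a minimal Python sketch. It is not CeX3D Inverse's implementation. It assumes an ideal pinhole camera for the first image, with vertices already given in that camera's coordinate frame (+Z forward, +Y down) and the principal point at the image centre. The names `project_uv`, `obj_with_projected_uvs` and `focal_px` are hypothetical and chosen only for this example.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Tri = Tuple[int, int, int]


def project_uv(p: Vec3, focal_px: float, width: int, height: int) -> Tuple[float, float]:
    """Project a camera-space point to UV coordinates (assumed pinhole model)."""
    x, y, z = p
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    # Perspective projection to pixel coordinates, principal point at the centre.
    px = focal_px * x / z + width / 2.0
    py = focal_px * y / z + height / 2.0
    # Pixel rows grow downwards, while the OBJ v axis grows upwards, so flip v.
    return px / width, 1.0 - py / height


def obj_with_projected_uvs(
    vertices: Sequence[Vec3],
    triangles: Sequence[Tri],
    focal_px: float,
    width: int,
    height: int,
) -> str:
    """Return OBJ text with one 'vt' per vertex, projected from one image."""
    lines: List[str] = []
    for x, y, z in vertices:
        lines.append(f"v {x:.6f} {y:.6f} {z:.6f}")
    for p in vertices:
        u, v = project_uv(p, focal_px, width, height)
        lines.append(f"vt {u:.6f} {v:.6f}")
    for a, b, c in triangles:
        # OBJ indices are 1-based; each vertex reuses its own index for 'vt'.
        lines.append(f"f {a + 1}/{a + 1} {b + 1}/{b + 1} {c + 1}/{c + 1}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    verts = [(0.0, 0.0, 1.0), (1.0, 0.0, 2.0), (0.0, 1.0, 2.0)]
    print(obj_with_projected_uvs(verts, [(0, 1, 2)], 1000.0, 2048, 1536))
```

With a single projection like this, surfaces that face away from the first camera still receive texture coordinates, but the texture there is stretched or wrong, which is one reason more complete UV support matters for models built from many photographs.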

Until now, CeX3D Inverse has generated RIB files with vertex colours only, rather than UV-texture maps. The RIB files will also support UV-texture maps, like the OBJ files, but this feature is not yet complete. The rendering in the image in the section above uses only vertex colours, rather than UV-texture maps.
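For readers unfamiliar with vertex colours in RIB, the following Python sketch writes a triangle mesh as a RenderMan `PointsPolygons` call with one `"Cs"` colour per vertex. It only illustrates the general file structure; the layout of the RIB files that CeX3D Inverse actually writes is not described here, and the function name `rib_vertex_coloured_mesh` is hypothetical.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]
Tri = Tuple[int, int, int]


def rib_vertex_coloured_mesh(
    vertices: Sequence[Vec3],
    colours: Sequence[Vec3],
    triangles: Sequence[Tri],
) -> str:
    """Return a RIB PointsPolygons statement with per-vertex "Cs" colours."""
    if len(vertices) != len(colours):
        raise ValueError("need exactly one colour per vertex")
    # Every polygon is a triangle, so each entry of the first array is 3.
    nverts = " ".join("3" for _ in triangles)
    # RIB vertex indices are 0-based (unlike OBJ, which is 1-based).
    indices = " ".join(str(i) for tri in triangles for i in tri)
    points = " ".join(f"{c:g}" for v in vertices for c in v)
    cs = " ".join(f"{c:g}" for col in colours for c in col)
    return (
        f"PointsPolygons [{nverts}] [{indices}]\n"
        f'  "P" [{points}]\n'
        f'  "Cs" [{cs}]\n'
    )


if __name__ == "__main__":
    print(rib_vertex_coloured_mesh(
        [(0, 0, 0), (1, 0, 0), (0, 1, 0)],
        [(1, 0, 0), (0, 1, 0), (0, 0, 1)],
        [(0, 1, 2)],
    ))
```

The renderer interpolates the three colours across each triangle, so the detail of the colouring is limited by the density of the mesh rather than by the resolution of the photographs.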

When the quality of the generated 3D models has improved a bit more, an example OBJ file and RIB file of the model shown in the images will be made available in a new gallery section that is to appear soon on this website.

Multi-View Model Integration

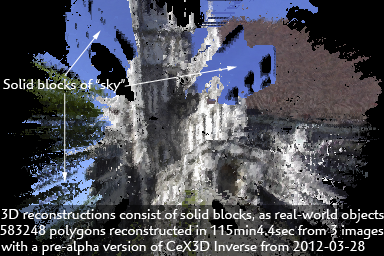

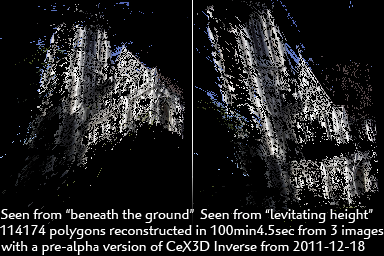

Development of support for OBJ files and UV-texture maps has not been the primary concern since the previous status update. Incorporating the estimated 3D (depth) data from all images into a single 3D model has been of much greater concern and required much more work than anticipated. The image shown here from 2011-12-18 actually used 3D (depth) data from only a single view:

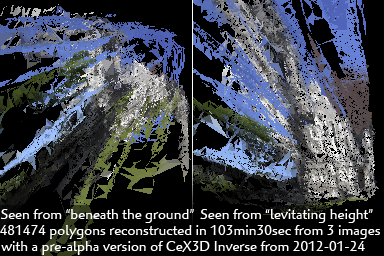

In order to incorporate 3D data from all views, four (4) different technologies were developed. Three of them were subsequently discarded, since they were not good enough. Below is an image from 2012-01-24, showing one of the very advanced and promising technologies for multi-view 3D model integration that were developed but nevertheless had to be discarded:

The technology that we use now has the advantage of constructing models that represent solid objects, like objects in the real physical world. This is sometimes referred to as the model being closed or "watertight", though it is actually not related to whether the object would be able to contain water or not; it refers to the solid parts being completely encapsulated by the model-surface, without any holes that would reveal that the model actually consists of an infinitely thin surface.
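One common way to test whether a triangle mesh has no holes in this sense is to check that every edge is shared by exactly two triangles. The Python sketch below shows that check. It is a general-purpose illustration, not the method used inside CeX3D Inverse, and the function name `is_closed` is hypothetical. It tests connectivity only, so it does not detect self-intersections or inconsistent face orientation.

```python
from collections import Counter
from typing import Sequence, Tuple

Tri = Tuple[int, int, int]


def is_closed(triangles: Sequence[Tri]) -> bool:
    """Return True if every edge is used by exactly two triangles."""
    edge_count: Counter = Counter()
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            # Store each edge with sorted endpoints so direction is ignored.
            edge_count[(min(u, v), max(u, v))] += 1
    # An edge used once lies on the border of a hole; an edge used more than
    # twice means the surface is not a simple closed skin.
    return bool(edge_count) and all(n == 2 for n in edge_count.values())


if __name__ == "__main__":
    tetrahedron = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
    print(is_closed(tetrahedron))       # closed surface
    print(is_closed(tetrahedron[:-1]))  # one face removed, so there is a hole
```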

The picture from the introduction at the top of this page shows the same model reconstructed with this technology from the same three photographs as used for the other two examples. The generated models still contain a large number of polygons compared to the actual quality of the model, so we are working both on improving the quality and on using fewer polygons.

The Work Continues

We are continuing development of CeX3D Inverse. The last two remaining points on CeX3D Inverse's critical TODO-list before alpha release (click here) still have to be completed. In addition to that, there are a couple of other issues that we would prefer to address, before releasing CeX3D Inverse to the public. We obviously did not get the first alpha release out in January or early February, as stated earlier. Instead, we are now aiming for a release in the beginning of May.

Follow the news page (click here) to see the latest status and achievements.

Modified: 2015-04-12

E-mail: Contact